A comprehensive journey through data collection, preprocessing, and feature engineering across multiple datasets on software engineer salaries.

Analysis of Software Engineer Salary

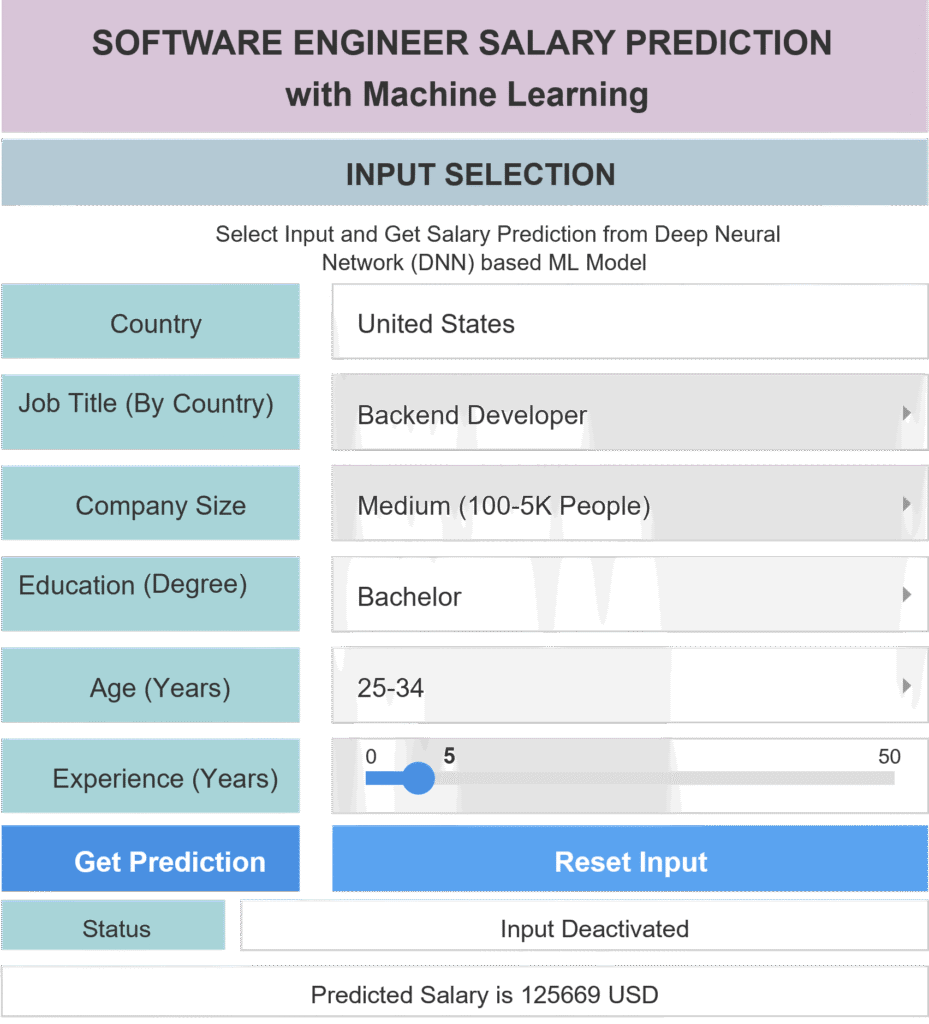

Collected and analyzed real-world survey data from 60,000+ professionals across 46 countries and 22 job titles for an AI-powered Salary Prediction App.

📋 Project Overview

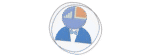

This project addresses one of the most critical questions in tech careers: What should my salary be?

Software engineer salaries vary dramatically based on multiple factors including location, company size, experience, education, and job specialization. This application leverages machine learning to provide data-driven salary predictions by analyzing these complex relationships using survey data from real software engineers worldwide.

Key Features of the App

- ⭐ Intelligent Salary Prediction: Deep neural network model trained on 60,000+ real salary data points

- ⭐ Interactive Data Visualization: Dynamic charts that update based on your selections

- ⭐ Comprehensive Analysis: Explore salary trends by education, experience, age, company size, and remote work status

- ⭐ Multi-dimensional Insights: Understand how different factors influence compensation in your specific country and role

- ⭐ Smart Input Validation: Country-specific job title filtering ensures reliable predictions

Introduction

Recently I embarked on an interesting project: building a comprehensive salary prediction model for software engineers worldwide. The goal was to understand what really drives compensation in tech and to create a tool that provides accurate salary estimates based on factors like location, experience, education, and job role. This blog post summarizes the core findings from that journey. I will walk through the data-processing steps, from collecting raw survey data to preparing a clean, analysis-ready dataset. Along the way, I ran into inconsistent data formats and missing values, and I had to standardize related job titles to make the dataset clean and reliable for machine learning applications.

Key Discussion Points

- How to merge and harmonize multiple datasets with different schemas

- Advanced data cleaning techniques for real world survey data

- Strategic feature engineering for machine learning

- Best practices for handling categorical variables and outliers

Assembling Data Sources

I collected the raw data from two major sources to create a comprehensive dataset spanning multiple years and job categories: the Stack Overflow Developer Survey and aijobs.net. All data covers the years 2021 to 2023.

Loading the Data

The first step was loading all datasets and extracting relevant columns. Each source had its own schema, so alignment was crucial.

import pandas as pd

import numpy as np

# Load all datasets

data3 = pd.read_csv("data/SOD2023/survey_results_public.csv")

data2 = pd.read_csv("data/SOD2022/survey_results_public.csv")

data0 = pd.read_csv("data/AIJOB2023/salaries.csv")

# Extract relevant columns from Stack Overflow surveys

df3 = data3[["Country", "EdLevel", "Employment", "ConvertedCompYearly",
             "RemoteWork", "DevType", "OrgSize", "WorkExp", "Age"]]

df2 = data2[["Country", "EdLevel", "Employment", "ConvertedCompYearly",
             "RemoteWork", "DevType", "OrgSize", "WorkExp", "Age"]]

# Extract from AI Jobs dataset (different schema)
df0 = data0[["experience_level", "employment_type", "job_title",
             "salary_in_usd", "remote_ratio", "company_location", "company_size"]]

Notice how the AI Jobs dataset uses completely different column names. This is typical when working with multiple data sources. Our first task is to create a unified schema that all datasets can conform to.

Initial Preprocessing

Standardizing Column Names

Before any analysis could begin, I needed to unify column names across all datasets. Consistency is key when merging data.

# Rename salary columns to consistent name

df3 = df3.rename({"ConvertedCompYearly": "SALARY"}, axis=1)

df2 = df2.rename({"ConvertedCompYearly": "SALARY"}, axis=1)

df0 = df0.rename({"salary_in_usd": "SALARY"}, axis=1)

# Remove rows with missing salary data

df3 = df3[df3["SALARY"].notnull()]

df2 = df2[df2["SALARY"].notnull()]

df0 = df0[df0["SALARY"].notnull()]

Filtering Employment Status

To ensure apples-to-apples comparisons, I focused exclusively on full-time employees. Part-time, contract, and other employment types have fundamentally different compensation structures, so they are excluded: the salary prediction model only needs to learn full-time salaries.

# Keep only full-time employees

df3 = df3[df3["Employment"] == "Employed, full-time"]

df2 = df2[df2["Employment"] == "Employed, full-time"]

df0 = df0[df0["employment_type"] == "FT"]

# Drop the employment column (now redundant)

df3 = df3.drop("Employment", axis=1)

df2 = df2.drop("Employment", axis=1)

df0 = df0.drop("employment_type", axis=1)

Data Reduction After Filtering

- Initial datasets: ~150,000 total responses

- After removing null salaries: ~120,000 responses

- After filtering full-time only: ~90,000 responses
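Row counts like the ones above are easy to lose track of once several filters are chained. The following is a minimal sketch of one way to record them, not the code used in the project: `log_step` is a hypothetical helper and the toy frame is invented for illustration.

```python
import pandas as pd

def log_step(df, label, history):
    """Record the row count after a filtering step and return df unchanged."""
    history.append((label, len(df)))
    return df

# Toy data standing in for one survey file (values are made up)
toy = pd.DataFrame({
    "SALARY": [50000, None, 72000, 64000, None],
    "Employment": [
        "Employed, full-time", "Employed, full-time", "Employed, part-time",
        "Employed, full-time", "Student",
    ],
})

history = []
step0 = log_step(toy, "initial", history)
step1 = log_step(step0[step0["SALARY"].notnull()], "non-null salary", history)
step2 = log_step(step1[step1["Employment"] == "Employed, full-time"],
                 "full-time only", history)

for label, rows in history:
    print(f"{label}: {rows} rows")
```

Keeping the counts in a list makes it straightforward to report how much data each filter removed.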

Feature Engineering

Remote Work Standardization

The Stack Overflow surveys used categorical text for remote work status, while the AI Jobs dataset used numeric ratios. I needed to harmonize these.

def map_remote_ratio(value):
    """Convert text-based remote work status to numeric ratio"""
    if value == 'Remote':
        return 100
    elif value == 'In-person':
        return 0
    elif value == 'Hybrid (some remote, some in-person)':
        return 50
    else:
        return 0  # Treat NaN (and any unrecognized value) as in-person

# Apply mapping to Stack Overflow data
df3['REMOTE RATIO'] = df3['RemoteWork'].apply(map_remote_ratio).astype(int)
df2['REMOTE RATIO'] = df2['RemoteWork'].apply(map_remote_ratio).astype(int)

# Drop original column and rename in AI dataset
df3 = df3.drop('RemoteWork', axis=1)
df2 = df2.drop('RemoteWork', axis=1)
df0 = df0.rename({"remote_ratio": "REMOTE RATIO"}, axis=1)

Design Decision: I chose to represent remote work as a percentage (0, 50, 100) rather than as categories because it gives every data point a consistent remote-work ratio across sources, better reflects the continuous nature of work arrangements, and works well with many machine learning algorithms.
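Because the mapping function sends every unmatched value to 0, a label that is spelled differently in one survey year would silently become "in-person". The sketch below shows one way to surface such labels before filling them. It is an illustration under assumptions: `REMOTE_MAP` is a hypothetical name, and "Fully remote" is used only as an example of a label the mapping does not know.

```python
import pandas as pd

# Same three labels as the mapping function above
REMOTE_MAP = {
    "Remote": 100,
    "Hybrid (some remote, some in-person)": 50,
    "In-person": 0,
}

remote = pd.Series([
    "Remote", "In-person", None,
    "Hybrid (some remote, some in-person)", "Fully remote",
])

# Series.map yields NaN for anything that is not a key of the dict
ratio = remote.map(REMOTE_MAP)

# Labels that are present in the data but missing from the mapping
unrecognized = remote[ratio.isna() & remote.notna()].unique().tolist()
print("Unrecognized labels:", unrecognized)

# Only after inspecting them, fall back to 0 as in the original function
ratio_filled = ratio.fillna(0).astype(int)
```

Printing the unrecognized labels first makes the fallback to 0 an explicit decision rather than a side effect.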

Company Size Categorization

Company size in the Stack Overflow surveys was granular (specific employee ranges), but I simplified it to three categories for consistency with the AI Jobs dataset.

def categorize_size(org_size):
    """Simplify company size into Large, Medium, Small"""
    if org_size in ['10,000 or more employees', '5,000 to 9,999 employees']:
        return 'L'  # Large
    elif org_size in ['100 to 499 employees', '500 to 999 employees',
                      '1,000 to 4,999 employees']:
        return 'M'  # Medium
    else:
        return 'S'  # Small (also catches missing or unrecognized values)

df3['COMPANY SIZE'] = df3['OrgSize'].apply(categorize_size)
df2['COMPANY SIZE'] = df2['OrgSize'].apply(categorize_size)

Caveat: Simplifying company size reduces granularity. In practice, there’s a significant difference between a 100-person startup and a 4,999-person company. This is a trade-off between model complexity and data consistency.
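A related caveat is that the `else` branch labels missing or unknown sizes as small. If that is not desired, an explicit dictionary keeps them as NaN so they can be imputed later. This is a sketch of an alternative, not the approach used in the project; `SIZE_MAP` is a hypothetical name, and the small-company labels and the "I don't know" answer are assumed spellings.

```python
import numpy as np
import pandas as pd

SIZE_MAP = {
    "10,000 or more employees": "L",
    "5,000 to 9,999 employees": "L",
    "1,000 to 4,999 employees": "M",
    "500 to 999 employees": "M",
    "100 to 499 employees": "M",
    # Assumed labels for the small bands
    "20 to 99 employees": "S",
    "10 to 19 employees": "S",
    "2 to 9 employees": "S",
}

org_size = pd.Series([
    "20 to 99 employees", "10,000 or more employees", np.nan, "I don't know",
])

# Values absent from SIZE_MAP stay NaN instead of defaulting to 'S'
company_size = org_size.map(SIZE_MAP)
print(company_size.isna().sum(), "rows left for imputation")
```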

Country Code Standardization

One of the trickiest challenges was standardizing country names. Survey respondents aren’t consistent, and different surveys use different conventions.

import pycountry

# Dictionary for common country name variations

country_name_replacements = {

'United States of America': 'United States',

'United Kingdom of Great Britain and Northern Ireland': 'United Kingdom',

'Hong Kong (S.A.R.)': 'Hong Kong',

'Iran, Islamic Republic of...': 'Iran, Islamic Republic of',

'Venezuela, Bolivarian Republic of...': 'Venezuela, Bolivarian Republic of',

'The former Yugoslav Republic of Macedonia': 'North Macedonia',

'South Korea': 'Korea, Republic of',

'Czech Republic': 'Czechia',

# ... and many more

}

def get_country_code(country_name):
    """Convert country name to ISO 3166-1 alpha-2 code"""
    try:
        country = pycountry.countries.get(name=country_name)
        return country.alpha_2
    except AttributeError:
        # Lookup failed: report it and keep the original name
        print(f'Error found for {country_name}')
        return country_name

# Apply replacements then convert to codes
df3['CountryTemp'] = df3['Country'].replace(country_name_replacements)
df3['COUNTRY CODE'] = df3['CountryTemp'].apply(get_country_code)

Why country codes? They’re standardized, compact, and internationally recognized. More importantly, they avoid the ambiguity that comes with country name variations.
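Since the function above falls back to the original name, failed lookups end up mixed into the code column. The sketch below shows the idea of collecting unmatched names separately so the replacement dictionary can be extended. To stay self-contained it uses a tiny hand-written lookup (`ISO_ALPHA2`) as a stand-in for pycountry; `NAME_FIXES`, `to_alpha2`, and the sample names are illustrative assumptions.

```python
# Tiny stand-in for an ISO 3166-1 lookup; the real pipeline queries pycountry
ISO_ALPHA2 = {
    "United States": "US",
    "United Kingdom": "GB",
    "Czechia": "CZ",
    "Korea, Republic of": "KR",
}

# Subset of the name replacements shown earlier
NAME_FIXES = {
    "United States of America": "United States",
    "Czech Republic": "Czechia",
    "South Korea": "Korea, Republic of",
}

def to_alpha2(name, unmatched):
    """Return the alpha-2 code, or None while recording the unmatched name."""
    canonical = NAME_FIXES.get(name, name)
    code = ISO_ALPHA2.get(canonical)
    if code is None:
        unmatched.add(name)
    return code

unmatched = set()
names = ["United States of America", "South Korea", "Czech Republic", "Nomadic"]
codes = [to_alpha2(name, unmatched) for name in names]

print(codes)
print("Needs a manual mapping:", sorted(unmatched))
```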

Experience Level Engineering

The AI Jobs dataset provided experience levels directly, but Stack Overflow only had years of experience, so I created a mapping function.

def convert_to_experience_level(work_exp, dataframe):
    """Map years of experience to standard experience levels"""
    max_work_exp = np.max(dataframe['WorkExp'])
    experience_ranges = {
        'EN': (0, 3),                  # Entry-level / Junior
        'MI': (3, 8),                  # Mid-level / Intermediate
        'SE': (8, 15),                 # Senior-level / Expert
        'EX': (15, max_work_exp + 1)   # Executive-level / Director
    }
    # Ranges are half-open: lower <= work_exp < upper
    for level, (lower, upper) in experience_ranges.items():
        if lower <= work_exp < upper:
            return level
    return 'NA'  # Missing experience matches no range

df3['EXP LEVEL'] = df3['WorkExp'].apply(
    convert_to_experience_level, dataframe=df3
)

| Experience Level | Years Range | Description |
|---|---|---|
| EN (Entry) | 0 to under 3 years | Junior developers, recent graduates |
| MI (Mid) | 3 to under 8 years | Intermediate developers with solid experience |
| SE (Senior) | 8 to under 15 years | Senior engineers, technical leads |
| EX (Executive) | 15+ years | Staff engineers, principal engineers, architects |
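The same binning can also be expressed without a row-wise function. The sketch below uses `pd.cut` with `right=False` so that the intervals are half-open, matching the table above. It is an alternative formulation on made-up values, not the code used in the project.

```python
import numpy as np
import pandas as pd

work_exp = pd.Series([0, 2, 3, 7.5, 8, 15, 40, np.nan])

# right=False gives the intervals [0, 3), [3, 8), [8, 15), [15, inf)
levels = pd.cut(
    work_exp,
    bins=[0, 3, 8, 15, np.inf],
    labels=["EN", "MI", "SE", "EX"],
    right=False,
)

# Missing experience becomes the 'NA' marker used elsewhere in this post
exp_level = levels.astype(object).where(levels.notna(), "NA")
print(exp_level.tolist())
```

Using `np.inf` as the last edge removes the need to look up the maximum experience in the data.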

Data Merging and Harmonizing

With all datasets standardized to common schemas, I could finally merge them. But first, a final round of column renaming to ensure perfect alignment.

# Final column standardization

df3_ = df3_.rename({

"DevType": "JOB TITLE",

"WorkExp": "WORK EXP",

"Age": "AGE",

"EdLevel": "EDUCATION"

}, axis=1)

# Similar for other dataframes...

# The grand merge

mdf = pd.concat([df0_, df2_, df3_], ignore_index=True)

print(f"Merged dataset shape: {mdf.shape}")

Result: A unified dataset with consistent column names, standardized values, and over 90,000 salary records spanning multiple years, countries, and job types.
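Before concatenating, it can help to confirm that every frame really exposes the same columns, and to remember which source each row came from. The following is a minimal sketch under assumptions: the frame names, the `SOURCE` column, and the toy rows are hypothetical and not part of the original pipeline.

```python
import pandas as pd

frames = {
    "aijobs_2023": pd.DataFrame({
        "SALARY": [120000], "JOB TITLE": ["ML ENGINEER"], "COUNTRY CODE": ["US"],
    }),
    "so_2023": pd.DataFrame({
        "SALARY": [65000], "JOB TITLE": ["DEVELOPER BACKEND"], "COUNTRY CODE": ["DE"],
    }),
}

expected = {"SALARY", "JOB TITLE", "COUNTRY CODE"}

# Collect frames whose columns differ from the expected schema
mismatched = {
    name: sorted(set(frame.columns) ^ expected)
    for name, frame in frames.items()
    if set(frame.columns) != expected
}
print("Schema mismatches:", mismatched)

# Tag each row with its origin, then stack the frames
merged = pd.concat(
    [frame.assign(SOURCE=name) for name, frame in frames.items()],
    ignore_index=True,
)
print(merged.shape)
```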

Mapping Education Levels

Education data needed simplification. Stack Overflow’s granular categories were reduced to four key levels.

education_mapping = {
    # Double quotes because these keys contain an apostrophe. If the survey
    # CSV uses a typographic apostrophe (’), the keys must match it exactly.
    "Bachelor's degree (B.A., B.S., B.Eng., etc.)": 'BD',
    "Master's degree (M.A., M.S., M.Eng., MBA, etc.)": 'MD',
    'Professional degree (JD, MD, Ph.D, Ed.D, etc.)': 'PD',
    'Some college/university study without earning a degree': 'SU',
    'Associate degree (A.A., A.S., etc.)': 'SU',
    'Primary/elementary school': 'SU',
    'Secondary school (e.g. American high school, etc.)': 'SU',
    'Something else': 'NA',
}

mdf['EDUCATION'] = mdf['EDUCATION'].map(education_mapping).fillna('NA')

Age Group Standardization

Age ranges were simplified to consistent brackets.

age_mapping = {
    '25-34 years old': '25-34',
    '35-44 years old': '35-44',
    '18-24 years old': '18-24',
    '45-54 years old': '45-54',
    '55-64 years old': '55-64',
    '65 years or older': '65+',
    'Under 18 years old': '-18',
    'Prefer not to say': 'NA',
}

mdf['AGE'] = mdf['AGE'].map(age_mapping).fillna('NA')

Job Title Normalization

Job titles were the wild west of this dataset. The same role could be described dozens of ways. Time for some aggressive text processing.

Text Preprocessing

import string

def preprocess_text(text):
    """Clean and standardize job title text"""
    try:
        text = text.strip()  # Remove whitespace
        text = text.split(';', 1)[0]  # Take first role if multiple listed
        text = text.translate(str.maketrans('', '', string.punctuation))
        text = text.upper()  # Standardize case
        return text
    except Exception as e:
        # Non-string values (e.g. NaN) end up here
        print(f"Error processing {text}: {e}")
        return 'UNKNOWN'

mdf['JOB TITLE'] = mdf['JOB TITLE'].apply(preprocess_text)

Why This Matters: Full-Stack Developer, Full Stack Developer, and fullstack developer are the same role but would be treated as different categories without normalization. This preprocessing unifies case and punctuation, so the first and third both become FULLSTACK DEVELOPER. Variants that differ only in spacing, such as FULL STACK DEVELOPER, still need an explicit mapping.
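To see what the cleaning does to the three spellings, the sketch below runs a condensed copy of `preprocess_text` on them. The final step that strips all spaces is a hypothetical addition used only to compare titles; it is not part of the original function.

```python
import string

def preprocess_text(text):
    """Condensed copy of the cleaning steps described above."""
    text = text.strip().split(";", 1)[0]
    text = text.translate(str.maketrans("", "", string.punctuation))
    return text.upper()

variants = ["Full-Stack Developer", "Full Stack Developer", "fullstack developer"]
cleaned = [preprocess_text(v) for v in variants]
print(cleaned)

# Hypothetical comparison key: ignore spaces entirely
keys = {title.replace(" ", "") for title in cleaned}
print(keys)
```

Two of the three variants collapse after cleaning. The spaced variant only matches once spaces are ignored or an explicit mapping is added.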

Data Point Filtering

I applied a crucial filter: keep only country-job combinations with at least 50 samples, so that every group the model learns from has enough data points to be statistically meaningful.

# Group by country and job title, filter groups with 50+ samples
dat = dat.groupby(['COUNTRY CODE', 'JOB TITLE']).filter(
    lambda group: len(group) >= 50
)

print(f"After filtering: {dat.shape}")
print(f"Unique countries: {dat['COUNTRY CODE'].nunique()}")
print(f"Unique job titles: {dat['JOB TITLE'].nunique()}")

Removing Noise Categories

Some job categories were too generic, unrelated to software engineering, or too ambiguous.

unusual_categories = [

'OTHER PLEASE SPECIFY',

'HARDWARE ENGINEER',

'EDUCATOR',

'STUDENT',

'MARKETING OR SALES PROFESSIONAL',

'SENIOR EXECUTIVE CSUITE VP ETC',

'SCIENTIST',

'BLOCKCHAIN',

'DESIGNER',

# ... etc

]

dat = dat[~dat['JOB TITLE'].isin(unusual_categories)]

Job Title Consolidation

Many titles described the same role under different names, so I mapped each variant to one canonical title.

title_mapping = {

'DEVELOPER FULLSTACK': 'FULLSTACK DEVELOPER',

'DEVELOPER BACKEND': 'BACKEND DEVELOPER',

'DEVELOPER FRONTEND': 'FRONTEND DEVELOPER',

'DEVELOPER DESKTOP OR ENTERPRISE APPLICATIONS': 'DESKTOP APP DEVELOPER',

'DEVELOPER MOBILE': 'MOBILE APP DEVELOPER',

'DEVELOPER GAME OR GRAPHICS': 'GAME OR GRAPHICS DEVELOPER',

'ENGINEER SITE RELIABILITY': 'SITE RELIABILITY ENGINEER',

'DATA SCIENTIST OR MACHINE LEARNING SPECIALIST': 'DATA SCIENCE OR ML SPECIALIST',

'ML ENGINEER': 'MACHINE LEARNING ENGINEER',

# ... many more mappings

}

fdat = dat.copy()

fdat['JOB TITLE'] = fdat['JOB TITLE'].map(title_mapping).fillna(fdat['JOB TITLE'])

Outlier Removal

Within each country-job combination, I removed salary outliers using percentile filtering.

def remove_outliers(group):
    """Remove extreme outliers using 5th and 95th percentiles"""
    lower = group['SALARY'].quantile(0.05)  # 5th percentile
    upper = group['SALARY'].quantile(0.95)  # 95th percentile
    return group[(group['SALARY'] >= lower) & (group['SALARY'] <= upper)]

grouped = fdat.groupby(['COUNTRY CODE', 'JOB TITLE'])
fcdat = grouped.apply(remove_outliers)
fcdat.reset_index(drop=True, inplace=True)

Statistical Note: I used the 5th and 95th percentiles rather than the traditional IQR rule because tech salaries often have legitimate extreme values. This keeps the middle 90% of each group and removes the most extreme values at both ends, which is where clearly erroneous entries tend to sit.
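To make the effect of percentile trimming concrete, the sketch below applies the same 5th/95th percentile bounds to one made-up group of 20 salaries. It uses `groupby(...).transform` to compute the bounds per row, which is an alternative formulation rather than the code used in the project.

```python
import pandas as pd

# One country-job group with 20 evenly spaced, made-up salaries
toy = pd.DataFrame({
    "COUNTRY CODE": ["US"] * 20,
    "JOB TITLE": ["BACKEND DEVELOPER"] * 20,
    "SALARY": list(range(60000, 160000, 5000)),
})

salary_by_group = toy.groupby(["COUNTRY CODE", "JOB TITLE"])["SALARY"]
lower = salary_by_group.transform(lambda s: s.quantile(0.05))
upper = salary_by_group.transform(lambda s: s.quantile(0.95))

# Keep rows inside the per-group bounds (inclusive on both ends)
trimmed = toy[toy["SALARY"].between(lower, upper)]
print(f"Dropped {len(toy) - len(trimmed)} of {len(toy)} rows")
```

Note that the trimming removes the lowest and highest values of every group, even when, as here, none of them is actually erroneous.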

Creating Job Categories

With dozens of individual job titles, I created higher-level categories to capture career paths and role types.

cat_mapping = {

# DevOps and Infrastructure

'SITE RELIABILITY ENGINEER': 'DEVOPS ROLES',

'SYSTEM ADMINISTRATOR': 'DEVOPS ROLES',

'DATABASE ADMINISTRATOR': 'DEVOPS ROLES',

'SECURITY PROFESSIONAL': 'DEVOPS ROLES',

'DEVOPS SPECIALIST': 'DEVOPS ROLES',

'CLOUD INFRASTRUCTURE ENGINEER': 'DEVOPS ROLES',

# AI/ML/Data Science

'MACHINE LEARNING ENGINEER': 'AI ML DS ROLES',

'DATA SCIENTIST': 'AI ML DS ROLES',

'DATA ENGINEER': 'AI ML DS ROLES',

'DATA ARCHITECT': 'AI ML DS ROLES',

'DATA ANALYST': 'AI ML DS ROLES',

# Management

'PROJECT MANAGER': 'MANAGERS',

'PRODUCT MANAGER': 'MANAGERS',

'ENGINEERING MANAGER': 'MANAGERS',

# Software Development

'FULLSTACK DEVELOPER': 'DEVELOPERS',

'BACKEND DEVELOPER': 'DEVELOPERS',

'FRONTEND DEVELOPER': 'DEVELOPERS',

'MOBILE APP DEVELOPER': 'DEVELOPERS',

'EMBEDDED SYSTEMS DEVELOPER': 'DEVELOPERS',

# ... etc

}

fcdata = fcdat.copy()

fcdata['JOB CATEGORY'] = fcdata['JOB TITLE'].map(cat_mapping).fillna(fcdata['JOB TITLE'])

Why Categories? Job categories serve multiple purposes:

- Enable broader analysis across similar roles

- Provide features for machine learning models

- Help with missing value imputation

- Make results more interpretable for end users

Finally we have:

- 4 Major Categories

- 30+ Specific Roles

- 50+ Countries
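Because the category mapping falls back to the job title itself, any title missing from `cat_mapping` becomes its own category. A quick check like the one below can confirm that only the intended categories remain. This is a sketch on toy data; the two-entry mapping and the `QA ENGINEER` title are hypothetical.

```python
import pandas as pd

# Shortened mapping for illustration
cat_mapping = {
    "BACKEND DEVELOPER": "DEVELOPERS",
    "DATA ENGINEER": "AI ML DS ROLES",
}

titles = pd.Series(
    ["BACKEND DEVELOPER", "DATA ENGINEER", "QA ENGINEER", "QA ENGINEER"]
)

mapped = titles.map(cat_mapping)

# Titles that have no category yet, with their frequencies
unmapped_counts = titles[mapped.isna()].value_counts()
print(unmapped_counts)

# Same fallback as in the post: unmapped titles keep their own name
category = mapped.fillna(titles)
print(category.nunique(), "distinct categories")
```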

Missing Value Handling

With 90,000+ records from multiple sources, missing values were inevitable. But I could not just drop them all or fill with global averages. I needed a smarter approach.

Context-Aware Imputation Strategy

My strategy was to impute missing values based on the most similar context available:

- Try to fill using mode from same Country + Job Category

- If still missing, use mode from same Country

- If still missing, use global mode
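The three fallback levels above can be sketched as a single helper. This is an illustration under assumptions, not the project's implementation: `fill_with_mode` and `impute_hierarchical` are hypothetical names, and the toy frame is invented.

```python
import numpy as np
import pandas as pd

def fill_with_mode(series):
    """Fill NaNs with the most frequent value; unchanged if no value exists."""
    modes = series.mode()
    return series.fillna(modes.iloc[0]) if not modes.empty else series

def impute_hierarchical(df, column, levels):
    """Apply mode imputation from the narrowest to the broadest grouping."""
    result = df[column]
    for keys in levels:
        group_keys = [df[key] for key in keys]
        # dropna=False keeps rows whose grouping key is itself missing
        result = result.groupby(group_keys, dropna=False).transform(fill_with_mode)
    return fill_with_mode(result)  # global mode as the last resort

toy = pd.DataFrame({
    "COUNTRY CODE": ["DE", "DE", "DE", "IN", "IN", "BR"],
    "JOB CATEGORY": ["DEVELOPERS", "DEVELOPERS", "MANAGERS",
                     "DEVELOPERS", "DEVELOPERS", "DEVELOPERS"],
    "EDUCATION": ["MD", np.nan, np.nan, "BD", "BD", np.nan],
})

imputed = impute_hierarchical(
    toy, "EDUCATION",
    levels=[["COUNTRY CODE", "JOB CATEGORY"], ["COUNTRY CODE"]],
)
print(imputed.tolist())
```

In the toy data, the second row is filled at the country and category level, the third at the country level, and the last only by the global mode.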

Education Imputation

The code below converts 'NA' strings to proper NaN values and then applies the first level of the hierarchy: it groups records by country code and job category and fills gaps with the most common education level within each group. Groups without any known value stay NaN and are left for the broader levels. This approach assumes professionals in similar roles and regions share comparable educational backgrounds.

# Convert 'NA' strings to actual NaN values

fdata['EDUCATION'] = fdata['EDUCATION'].replace('NA', np.nan)

# Hierarchical imputation

fdata['EDUCATION'] = fdata.groupby(['COUNTRY CODE', 'JOB CATEGORY'])['EDUCATION'] \

.transform(lambda x: x.fillna(x.mode().iloc[0] if not x.mode().empty else np.nan))

# Check results

print(f"Missing education values: {fdata['EDUCATION'].isnull().sum()}")

Age Group Imputation

Here the code standardizes missing age values by converting NA strings to NaN, then performs group-based imputation. Missing ages are replaced with the modal age within each country-job category combination. This strategy ensures imputed values reflect typical age distributions for similar positions in the same geographical context.

fdata['AGE'] = fdata['AGE'].replace('NA', np.nan)

fdata['AGE'] = fdata.groupby(['COUNTRY CODE', 'JOB CATEGORY'])['AGE'] \

.transform(lambda x: x.fillna(x.mode().iloc[0] if not x.mode().empty else np.nan))

print(f"Missing age values: {fdata['AGE'].isnull().sum()}")

Experience Level Imputation

Missing experience levels are handled the same way: 'NA' strings are first converted to NaN, then missing values are filled with the mode of each country and job category group. The experience levels are therefore approximated from job categories and regional employment patterns, which gives contextually relevant estimates.

fdata['EXP LEVEL'] = fdata['EXP LEVEL'].replace('NA', np.nan)

fdata['EXP LEVEL'] = fdata.groupby(['COUNTRY CODE', 'JOB CATEGORY'])['EXP LEVEL'] \

.transform(lambda x: x.fillna(x.mode().iloc[0] if not x.mode().empty else np.nan))

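print(f"Missing experience level values: {fdata['EXP LEVEL'].isnull().sum()}")

Work Experience: A Special Case

Work experience is numeric, so instead of a mode I derived missing values from each record's experience level.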

experience_ranges = {

'EN': (0, 3), # Entry-level

'MI': (3, 8), # Mid-level

'SE': (8, 15), # Senior-level

'EX': (15, 25) # Executive-level

}

def randomize_average(lower, upper):
    """Generate a value near the midpoint with small random variation"""
    midpoint = (lower + upper) / 2
    variation = np.random.randint(-1, 2)  # -1, 0, or 1
    return int(midpoint + variation)

# Fill missing work experience based on experience level
# (rows whose experience level is still missing are left unchanged)
fdata['WORK EXP'] = fdata.apply(
    lambda row: randomize_average(*experience_ranges[row['EXP LEVEL']])
    if pd.isnull(row['WORK EXP']) and row['EXP LEVEL'] in experience_ranges
    else row['WORK EXP'],
    axis=1
)

Design Choice: Rather than using exact midpoints, I add small random variations (-1, 0, +1 years). This prevents artificial clustering at specific values while maintaining realistic distributions.
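Random imputation makes the dataset differ from run to run unless the generator is seeded. The sketch below shows a reproducible variant that also keeps each imputed value inside its level's range. The seed value, the `impute_years` helper, and the toy rows are assumptions for illustration.

```python
import numpy as np
import pandas as pd

experience_ranges = {"EN": (0, 3), "MI": (3, 8), "SE": (8, 15), "EX": (15, 25)}
rng = np.random.default_rng(42)  # fixed seed so reruns give the same values

def impute_years(level):
    """Midpoint of the level's range plus -1, 0 or +1, kept inside the range."""
    if level not in experience_ranges:
        return np.nan  # unknown level: nothing to derive the years from
    lower, upper = experience_ranges[level]
    value = int((lower + upper) / 2 + rng.integers(-1, 2))
    return min(max(value, lower), upper - 1)

toy = pd.DataFrame({
    "EXP LEVEL": ["EN", "MI", "EX", np.nan],
    "WORK EXP": [np.nan, 5.0, np.nan, np.nan],
})

# Only touch rows where work experience is missing
missing = toy["WORK EXP"].isna()
toy.loc[missing, "WORK EXP"] = toy.loc[missing, "EXP LEVEL"].map(impute_years)
print(toy)
```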

Final Touches and Quality Control

A few final modifications remove the remaining outliers and expand abbreviated values into readable labels.

Salary Range Filtering

I applied reasonable salary bounds based on domain knowledge of global tech compensation.

data = fdata.copy()

data = data[data["SALARY"] <= 200000] # Upper bound

data = data[data["SALARY"] >= 10000] # Lower bound (accounts for lower CoL countries)

print(f"Final dataset shape: {data.shape}")

Final Quality Check

dat = data.copy()

dat = dat.groupby(['COUNTRY CODE', 'JOB TITLE']).filter(
    lambda group: len(group) >= 50
)

print(f"Final shape: {dat.shape}")
print(f"Final unique countries: {dat['COUNTRY CODE'].nunique()}")
print(f"Final unique job titles: {dat['JOB TITLE'].nunique()}")

Human-Readable Labels

def get_country_name(country_code):
    """Convert ISO code back to country name"""
    try:
        country = pycountry.countries.get(alpha_2=country_code)
        return country.name.upper()
    except AttributeError:
        return ''

dat['COUNTRY NAME'] = dat['COUNTRY CODE'].apply(get_country_name)

# Expand abbreviated values

replacements = {

'COMPANY SIZE': {'M': 'MEDIUM', 'L': 'LARGE', 'S': 'SMALL'},

'EDUCATION': {'BD': 'BACHELOR', 'MD': 'MASTER', 'PD': 'DOCTORAL', 'SU': 'UNDERGRAD'},

'REMOTE RATIO': {0: 'NOT REMOTE', 50: 'HYBRID', 100: 'FULL REMOTE'},

    # Year ranges follow the half-open bins used earlier: [0, 3), [3, 8), [8, 15), 15+
    'EXP LEVEL': {
        'MI': 'MID (3 TO 7)',
        'SE': 'SENIOR (8 TO 14)',
        'EN': 'ENTRY (0 TO 2)',
        'EX': 'EXPERT (15+)'
    }

}

for column, mapping in replacements.items():
    dat[column] = dat[column].replace(mapping)

# Final column renaming for clarity

dat.rename(columns={

'COUNTRY NAME': 'COUNTRY',

'EXP LEVEL': 'EXPERIENCE (LEVEL)',

'AGE': 'AGE (YEARS)',

'WORK EXP': 'EXPERIENCE (YEARS)',

'REMOTE RATIO': 'REMOTE WORK'

}, inplace=True)

Save the Results

With all processing complete, it is time to save the cleaned data for use with ML models.

# Save the final processed dataset

dat.to_csv('processed_data.csv', index=False)

# Also save country code lookup table

country_lookup = dat[['COUNTRY', 'COUNTRY CODE']].drop_duplicates()

country_lookup.to_csv('country_code.csv', index=False)

Final Dataset Statistics:

- Total Records: 70,000+ cleaned salary entries

- Countries: 50+ countries with sufficient data

- Job Categories: 4 major categories, 30+ specific roles

- Features: 9 predictor variables

- Missing Values: <1% after intelligent imputation

- Data Quality: All country-job combinations have 50+ samples
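Properties like these can be checked programmatically before the file is handed to a model. The following is a minimal sketch, assuming the column names `COUNTRY CODE`, `JOB TITLE`, and `SALARY` and the bounds described earlier; `quality_report` is a hypothetical helper and the toy frame is made up.

```python
import pandas as pd

def quality_report(df, min_group=50, salary_bounds=(10000, 200000)):
    """Summarize group sizes, salary bounds and missing values."""
    group_sizes = df.groupby(["COUNTRY CODE", "JOB TITLE"]).size()
    return {
        "rows": len(df),
        "smallest_group": int(group_sizes.min()),
        "groups_below_min": int((group_sizes < min_group).sum()),
        "salary_in_bounds": bool(df["SALARY"].between(*salary_bounds).all()),
        "missing_share": float(df.isna().to_numpy().mean()),
    }

# Toy frame: one group is large enough, the other is not
toy = pd.DataFrame({
    "COUNTRY CODE": ["US"] * 60 + ["DE"] * 40,
    "JOB TITLE": ["BACKEND DEVELOPER"] * 100,
    "SALARY": [90000] * 100,
})

report = quality_report(toy)
print(report)
```

On the toy frame the report flags one group below the 50-sample minimum, which is the kind of violation the final filter is meant to rule out.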

Key Takeaways

Data Harmonization is 70% of the Work

The actual model training (not covered here) took a fraction of the time compared to cleaning and harmonizing the data. Real-world data is messy, inconsistent, and full of surprises.

Domain Knowledge is Crucial

Decisions like salary bounds ($10K-$200K), experience level ranges, and job category groupings all required deep understanding of the tech industry. Pure statistics would have missed important context.

Context-Aware Imputation

Filling missing education data with the mode from the same country and job category preserves important patterns. A Data Scientist in Germany likely has different education than a Mobile Developer in India.

Ensuring Enough Samples per Category

By enforcing minimum sample sizes per group (50+ records), I sacrificed some rare country-job combinations but gained statistical reliability. Better to have accurate predictions for 50 countries than noisy predictions for 100.

Standardization Enables Scalability

Using ISO country codes, standardized experience levels, and consistent job categories makes it easy to add new data sources in the future. The infrastructure is now reusable.

Important Limitations:

- Survey data has selection bias (respondents may not represent entire population)

- Self-reported salaries may have inaccuracies

- Job title categorization involved subjective decisions

- Cost of living adjustments not included (comparing absolute USD values)

- Data is 1-3 years old and may not reflect current market conditions

Conclusion

What started as three disparate datasets with inconsistent schemas, messy text fields, and scattered missing values has been transformed into a clean, analysis-ready dataset for machine learning.

The journey involved strategic decisions at every step: how to standardize job titles, which records to keep, how to handle missing values, and how to create meaningful categories from chaos. Each decision was guided by domain knowledge, statistical principles, and the ultimate goal of building an accurate salary prediction model.

Next Steps: With this foundation, we can now build machine learning models to predict salaries, analyze compensation trends across countries and roles, and provide insights to job seekers and employers alike.

Data Sources: Stack Overflow Developer Surveys (2021-2023) & AI Jobs Dataset (2023)

💬 Feedback & Support

Loved the app? Have suggestions? Found a bug?

- Blog: analyticalman.com

- Live App: app.analyticalman.com/salary

- Issues: Open a GitHub issue

- Contact: analyticalman.com

Acknowledgments

- Stack Overflow for their comprehensive annual developer survey

- AIJobs.net for providing salary data for Machine Learning Engineers

- The open-source community for amazing ML libraries and tools

If this project helped you, consider giving it a ⭐ on GitHub!

Appendix

All input features along with their unique values are listed below.